Backpressure marks the point where a boundary reaches capacity and upstream systems have to adjust.

Distributed systems tend to change shape under load. Queues lengthen and latency widens. Retries compound. Humans step in to compensate. What emerges over time is a system that no longer behaves as designed.

Backpressure addresses that shift. In networking it appears as TCP flow control. In streaming systems it propagates when consumers cannot keep up. In operating systems it shows up as bounded buffers and blocking writes. The principle is consistent: when a downstream component reaches capacity, it signals upstream and constrains further input.

Many production stacks implement elements of this idea. It is less common to elevate it to a core design primitive.

Capacity belongs to the boundary between services rather than to a service in isolation. An HTTP handler can accept requests faster than its database can commit writes. A worker pool can drain a queue faster than an external API can respond. A deployment pipeline can generate artifacts faster than they can be verified. In each case, overload emerges at the seam.

When the boundary does not express its limits explicitly, the system invents its own constraints. Memory fills. Retries amplify load. Operators intervene manually. These are implicit forms of backpressure that surface late and carry high cost.

A boundary that encodes capacity directly changes the behavior of the entire graph. Rate limits, bounded queues, semaphore guards, and admission controllers make limits visible. They require producers to negotiate with consumers before more work enters the system. That visibility allows operators to decide how demand should be handled.
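As one illustration of a semaphore guard, the sketch below admits a fixed number of in-flight calls and rejects the rest immediately. The names `ConcurrencyGuard` and `OverloadedError` are hypothetical, and the plain `rejected` counter is an assumption made for brevity (a production version would protect it with a lock or use a metrics library).

```python
import threading


class OverloadedError(Exception):
    """Raised when the boundary is at capacity (hypothetical name)."""


class ConcurrencyGuard:
    """Admits at most `limit` in-flight calls and rejects the rest."""

    def __init__(self, limit: int) -> None:
        self._slots = threading.BoundedSemaphore(limit)
        self.rejected = 0  # not thread-safe; kept simple for illustration

    def try_run(self, fn, *args):
        # Non-blocking acquire: a full boundary answers "no" right away
        # instead of letting callers pile up behind it.
        if not self._slots.acquire(blocking=False):
            self.rejected += 1
            raise OverloadedError("boundary at capacity")
        try:
            return fn(*args)
        finally:
            # Always free the slot, even if fn raised.
            self._slots.release()
```

Rejecting rather than blocking is a design choice here. A blocking acquire with a timeout would slow producers down instead of turning them away, which may suit internal callers better than external ones.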

Buffers smooth bursts. They also obscure steady-state imbalance.

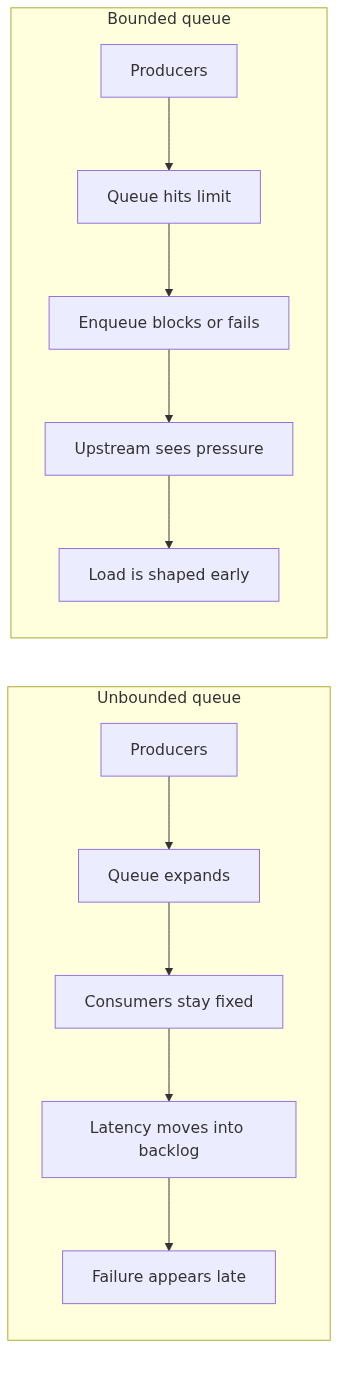

Consider a job queue without an enforced upper bound. Producers enqueue aggressively. Consumers process at a fixed rate. When demand exceeds throughput, the queue grows. Nothing fails immediately. Metrics show acceptable response times at the edge because the system acknowledges receipt before work completes.

The queue becomes a reservoir of deferred failure. Latency shifts from the request path to waiting time. If downstream capacity remains unchanged, recovery requires draining a backlog while new work continues to arrive.
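A rough way to size the problem is to treat arrivals and service as constant rates. The sketch below uses that simplification (a fluid approximation, not a queueing model), and the function names and example rates are assumptions chosen for illustration.

```python
def backlog_after(arrival_rate: float, service_rate: float, seconds: float) -> float:
    """Jobs waiting after `seconds` of sustained load, starting from an empty queue."""
    return max(0.0, (arrival_rate - service_rate) * seconds)


def drain_time(backlog: float, arrival_rate: float, service_rate: float) -> float:
    """Seconds needed to clear `backlog` while new work keeps arriving."""
    spare = service_rate - arrival_rate
    if spare <= 0:
        return float("inf")  # no spare capacity: the backlog never drains
    return backlog / spare


# Ten minutes at 120 jobs/s against a consumer that handles 100 jobs/s.
backlog = backlog_after(arrival_rate=120, service_rate=100, seconds=600)

# Demand then falls to 90 jobs/s; only 10 jobs/s of capacity is spare.
recovery = drain_time(backlog, arrival_rate=90, service_rate=100)
```

Under these assumed numbers, ten minutes of overload leaves 12,000 jobs waiting, and clearing them takes twenty minutes even after demand drops below capacity.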

A bounded queue changes the dynamics. When full, enqueue operations block or fail. Upstream systems receive an immediate signal. Retries become visible. Operators see saturation as it happens. Stress is recorded at the boundary instead of accumulating internally.
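A minimal sketch of the failing variant, using Python's standard `queue.Queue` with a `maxsize`. The `submit` helper and the bound of two are assumptions for illustration.

```python
import queue

# A bound of 2 is arbitrary; real limits come from measured consumer throughput.
jobs: "queue.Queue[str]" = queue.Queue(maxsize=2)


def submit(job: str) -> bool:
    """Try to enqueue without waiting; report saturation to the caller."""
    try:
        jobs.put_nowait(job)
        return True
    except queue.Full:
        # The producer learns about saturation now, not after a long wait.
        return False


results = [submit(f"job-{i}") for i in range(3)]
# The first two submissions are accepted; the third is refused.
```

The blocking variant is `jobs.put(job, timeout=...)`, which stalls the producer for up to the timeout before raising `queue.Full`.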

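One useful way to look at the difference is to ask where the system records pressure. An unbounded queue records it internally, as growing depth and waiting time. A bounded queue records it at the boundary, as blocked or rejected enqueues.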

Backpressure gains strength when it propagates transitively. If Service C slows, Service B must experience that slowdown, and Service A must feel it through B. Otherwise A continues emitting load into a bottleneck it cannot observe.
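The transitive effect can be seen in a small tick-based simulation. This is a toy model under stated assumptions: three stages A, B, and C joined by two bounded queues, fixed per-tick rates, and a hypothetical `simulate` function.

```python
from collections import deque


def simulate(ticks: int, a_rate: int, b_rate: int, c_rate: int, bound: int):
    """Return (rejections seen by A, depth of A->B queue, depth of B->C queue)."""
    q_ab, q_bc = deque(), deque()
    rejected_at_a = 0
    for _ in range(ticks):
        # C drains up to c_rate items per tick.
        for _item in range(min(c_rate, len(q_bc))):
            q_bc.popleft()
        # B forwards only while the B->C queue has room, so C's pace limits B.
        moved = 0
        while moved < b_rate and q_ab and len(q_bc) < bound:
            q_bc.append(q_ab.popleft())
            moved += 1
        # A offers a_rate items; a full A->B queue rejects the excess.
        for _item in range(a_rate):
            if len(q_ab) < bound:
                q_ab.append(object())
            else:
                rejected_at_a += 1
    return rejected_at_a, len(q_ab), len(q_bc)


slow_c = simulate(ticks=10, a_rate=3, b_rate=3, c_rate=1, bound=4)
fast_c = simulate(ticks=10, a_rate=3, b_rate=3, c_rate=3, bound=4)
```

With C slow, both queues fill and A starts seeing rejections within a few ticks, even though A never talks to C directly. With C keeping pace, A sees none.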

Propagation requires coordination. Timeouts must align with realistic service times. Retry policies must respect upstream limits. Circuit breakers must integrate with rate limiting rather than bypass it. When these mechanisms are misaligned, each layer compensates independently and contention increases.
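To make the retry concern concrete, the sketch below shows how independent retries multiply across layers, and one common way to cap them: a retry budget that permits retries only as a fraction of original requests. `worst_case_attempts` and `RetryBudget` are hypothetical names, and the 10% ratio is an assumed value.

```python
def worst_case_attempts(layers: int, retries_per_layer: int) -> int:
    """Attempts reaching the deepest dependency if every layer exhausts its retries."""
    return (1 + retries_per_layer) ** layers


class RetryBudget:
    """Permits retries only up to `ratio` times the requests recorded so far."""

    def __init__(self, ratio: float) -> None:
        self.ratio = ratio
        self.requests = 0
        self.retries = 0

    def record_request(self) -> None:
        self.requests += 1

    def can_retry(self) -> bool:
        # Refuse the retry if granting it would exceed the budget.
        if self.retries + 1 > self.ratio * self.requests:
            return False
        self.retries += 1
        return True


# Three layers that each retry three times: 4 ** 3 attempts at the bottom.
amplification = worst_case_attempts(layers=3, retries_per_layer=3)

budget = RetryBudget(ratio=0.1)
for _ in range(20):
    budget.record_request()
decisions = [budget.can_retry() for _ in range(3)]
```

With the budget in place, twenty requests earn two retries. The third is refused, which keeps retry traffic proportional to real demand when a dependency is already struggling.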

A clear contract helps: each boundary exposes its current capacity and enforces it consistently. When downstream slows, upstream throughput decreases in response. The system settles into a new operating range defined by the constrained edge. Overload may still occur, but it is expressed coherently.

Capacity constraints encode cost. A saturated dependency consumes scarce resources: CPU, memory, connection pools, human attention. When backpressure is explicit, those constraints can be reflected in policy.

Rate limits per tenant translate infrastructure scarcity into allocation rules. Priority queues express differentiated service levels. Admission control enforces budgets at the perimeter. These mechanisms convert capacity into allocation decisions.
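A per-tenant rate limit is often built as a token bucket per tenant. The following is a minimal single-threaded sketch, not a production limiter. `TenantRateLimiter`, its parameters, and the injectable `clock` are assumptions made so the behavior can be exercised deterministically.

```python
import time


class TenantRateLimiter:
    """Token bucket per tenant: `rate` tokens per second, at most `burst` stored."""

    def __init__(self, rate: float, burst: float, clock=time.monotonic) -> None:
        self.rate = rate
        self.burst = burst
        self.clock = clock
        self._tokens: dict[str, float] = {}
        self._last: dict[str, float] = {}

    def allow(self, tenant: str) -> bool:
        now = self.clock()
        elapsed = now - self._last.get(tenant, now)
        self._last[tenant] = now
        # Refill in proportion to elapsed time, capped at the burst size.
        # A tenant seen for the first time starts with a full bucket.
        tokens = min(self.burst, self._tokens.get(tenant, self.burst) + elapsed * self.rate)
        if tokens < 1:
            self._tokens[tenant] = tokens
            return False
        self._tokens[tenant] = tokens - 1
        return True
```

Because each tenant draws from its own bucket, one aggressive client exhausts only its own allocation, and the refusals it receives are attributable to it.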

Without explicit backpressure, cost accounting distorts. A single aggressive client can displace others without visibility. Latency increases across the board. Engineers investigate symptoms while the source remains obscured.

When overload is shaped at the boundary, trade-offs become concrete. A team might add workers, enlarge the database, lower request rate, or change priority. Each option changes how demand interacts with capacity. The resulting feedback is easier to interpret under sustained load.

Operational teams behave like distributed systems. Incoming requests accumulate in ticket queues. Deployment pipelines buffer pending changes. Incident channels absorb unresolved alerts.

Unbounded buffers allow organizations to absorb overload silently. Engineers work longer hours. Context switching increases. Quality degrades. Dashboards rarely reflect this cost.

Introducing backpressure at organizational boundaries can be mechanical. Limit work in progress. Enforce review capacity before accepting new tasks. Gate deployments on explicit approval throughput. These are bounded queues applied to human systems.

When intake is constrained by review capacity, the organization feels its limits immediately. Stakeholders choose between delay and reprioritization. Trade-offs surface earlier. Stress appears at the point of entry rather than later in the delivery cycle.

There is a practical reason to saturate early. When boundaries enforce limits directly, constraints show up in a place operators can actually inspect. Gradual degradation without signaling tends to blur the picture. Users adapt to latency and inconsistency until a threshold is crossed, often unpredictably.

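Designing for early saturation involves the mechanisms described earlier: bounded queues, rate limits, semaphore guards, and admission control at the perimeter.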

Each mechanism introduces friction. That friction shapes traffic to match capacity.

Early saturation also narrows incident scope. When boundaries enforce limits, overload in one region is less likely to cascade into others. Operators can identify the constrained edge and adjust either capacity or demand.

Treating backpressure as a primitive requires instrumentation at boundaries. Metrics should include queue depth, rejection rate, time spent blocked on semaphores, and retry amplification factors. These indicators describe proximity to the system’s capacity envelope.
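The indicators above can be carried in a small per-boundary record. The sketch below is one possible shape. The field names are assumptions, and retry amplification is defined here as total attempts divided by original requests.

```python
from dataclasses import dataclass


@dataclass
class BoundaryStats:
    """Counters for one boundary over one reporting window (hypothetical shape)."""

    original_requests: int = 0  # first attempts only
    retries: int = 0            # repeat attempts
    rejected: int = 0           # attempts refused at the boundary
    blocked_seconds: float = 0.0  # total time callers spent waiting for a slot
    queue_depth: int = 0        # depth sampled at the end of the window

    @property
    def attempts(self) -> int:
        return self.original_requests + self.retries

    @property
    def rejection_rate(self) -> float:
        """Fraction of all attempts that the boundary refused."""
        return self.rejected / self.attempts if self.attempts else 0.0

    @property
    def retry_amplification(self) -> float:
        """Attempts per original request; 1.0 means no retries."""
        if not self.original_requests:
            return 1.0
        return self.attempts / self.original_requests


window = BoundaryStats(original_requests=100, retries=50, rejected=30)
```

For this assumed window, a fifth of attempts were refused and each original request generated one and a half attempts on average.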

Dashboards that focus solely on request latency and error rate miss the buildup phase. By the time errors spike, queues may already be deep and recovery slow.

Observability tied to boundaries supports proactive scaling. If rejection rates trend upward during predictable peaks, capacity can be adjusted ahead of failure. If specific clients frequently hit limits, allocation policies can be revisited. In practice, the boundary is often the easiest place to inspect what the system is doing.

Many systems are optimized to absorb load. Autoscaling groups expand. Caches mask slow dependencies. Retries hide transient errors. These techniques help with short bursts, but they can conceal persistent imbalance.

Shaping load through backpressure changes the operating model. Intake is constrained by sustainable throughput. Scaling decisions follow observed saturation and the capacity data gathered at the boundary. Over time, throughput aligns more closely with actual capacity. Latency remains bounded, and queues are more likely to reflect temporary bursts than chronic backlog.